According to the latest IBM Cost of a Data Breach Report 2026, 97% of AI-related security breaches involved AI systems that lacked proper access controls. While 74% of companies plan to deploy agentic AI within the next two years, only 21% report having a mature governance model for autonomous agents. Shadow AI widens these gaps further.

The era of Shadow IT has evolved. In 2026, the primary threat to your enterprise perimeter isn’t just an unapproved SaaS app—it’s Shadow AI. While security teams focus on official AI portals, developers are spinning up private Model Context Protocol (MCP) servers on AWS instances and connecting third-party tools—all without a single ticket hitting the security desk.

In most organizations, the security team can’t account for half of these agents or where they run. This is the new “next-gen workforce,” and it’s the fastest-growing attack surface in enterprise security.

The Reality: Shadow AI on Your Cloud Platforms

While many organizations worry about “unknown” rogue agents, we’ve found a recurring irony among our customers: they lack visibility into the AI services they already run on their primary cloud platforms. Even when a cloud platform is officially sanctioned (like AWS, Azure, or GCP), IT and Security teams are often flying blind. They don’t know who launched which agent, which humans have access to it, or what internal resources those agents can touch. The result is “Shadow AI within the Cloud”: trusted environments hosting completely ungoverned, invisible agents.

The data confirms this isn’t an edge case. Gartner predicts that 40% of enterprises will face security incidents from shadow AI by 2030, and that 40% of enterprise apps will embed task-specific AI agents by the end of 2026—up from under 5% today. Forrester’s AEGIS framework warns that “few, if any, security controls or control planes exist for agentic AI.” The governance tooling simply hasn’t caught up to the deployment velocity.

The New Anatomy of Shadow AI

Unlike a rogue SaaS app, which does nothing until someone uses it, Shadow AI is “agentic”: it calls APIs and moves data on its own. In 2026, Shadow AI shows up in three dangerous ways:

- Private MCP Servers & Agents on AWS: Developers often launch lightweight MCP servers or AI agents on EC2 or within private VPCs to bypass corporate filters. These servers act as gateways, giving AI agents direct, unmonitored access to internal databases and Amazon S3 buckets.

- Public MCP & Tool Sprawl: Beyond the server itself, agents use public MCP servers to call external tools—like a Jira MCP call to update tickets or a GitHub tool to push code—often using long-lived credentials that never expire.

- Shadow AI Connections & Silent Permissions: Employees are increasingly connecting “AI Productivity Bots” to corporate Slack or Google Workspace. These agents can inherit broad scopes (such as Slack’s files:read or Google’s gmail.send) that remain active 24/7 without a human in the loop.
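To make the scope risk concrete, here is a minimal Python sketch of the kind of check a security team could run against an exported list of third-party app grants. The `AppGrant` record, the export format, and the choice of which scopes count as “high risk” are assumptions for illustration; only the scope strings themselves are real Slack and Google OAuth scope names.

```python
from dataclasses import dataclass, field

# Hypothetical watchlist. The scope strings are real Slack and Google OAuth
# scopes, but treating them as "high risk" is an illustrative assumption.
HIGH_RISK_SCOPES = {
    "slack": {"files:read", "channels:history", "chat:write"},
    "google": {
        "https://www.googleapis.com/auth/gmail.send",
        "https://www.googleapis.com/auth/drive",
        "https://www.googleapis.com/auth/drive.readonly",
    },
}


@dataclass
class AppGrant:
    """One third-party app's OAuth grant (hypothetical export format)."""
    app_name: str
    platform: str  # "slack" or "google"
    scopes: set[str] = field(default_factory=set)


def flag_risky_grants(grants: list[AppGrant]) -> dict[str, set[str]]:
    """Map each app name to the subset of its scopes on the watchlist."""
    findings: dict[str, set[str]] = {}
    for grant in grants:
        # Unknown platforms have no watchlist, so they produce no findings.
        risky = grant.scopes & HIGH_RISK_SCOPES.get(grant.platform, set())
        if risky:
            findings[grant.app_name] = risky
    return findings


if __name__ == "__main__":
    grants = [
        AppGrant("meeting-notes-bot", "slack", {"files:read", "chat:write"}),
        AppGrant("calendar-helper", "google",
                 {"https://www.googleapis.com/auth/calendar.readonly"}),
        AppGrant("inbox-assistant", "google",
                 {"https://www.googleapis.com/auth/gmail.send"}),
    ]
    for app, scopes in sorted(flag_risky_grants(grants).items()):
        print(app, sorted(scopes))
```

A static scope list is only a starting point; it says nothing about whether a human still reviews what the bot does with those scopes.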

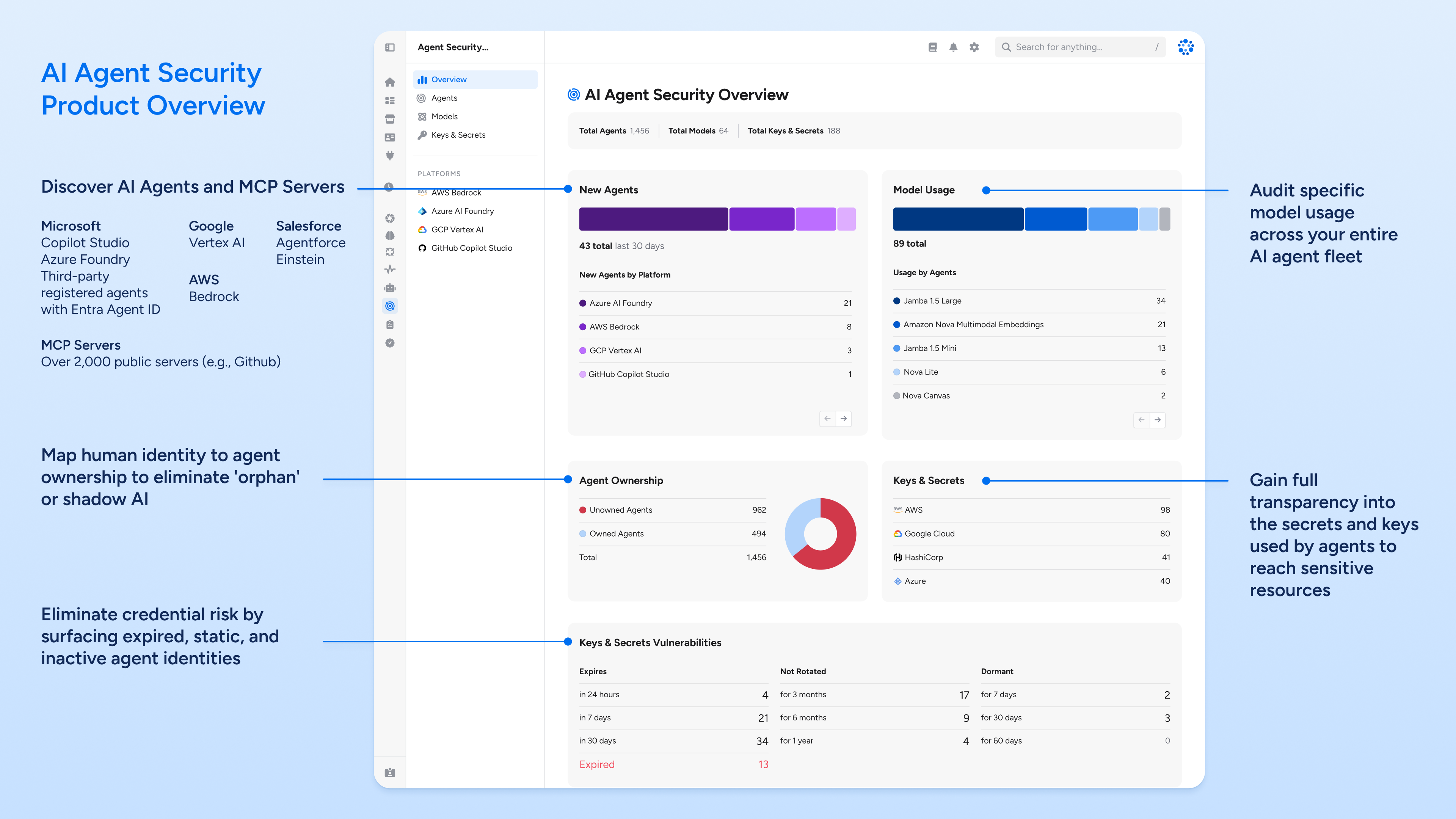

Phase 1: Complete Discovery (Public, Private, & Beyond)

You cannot govern what you cannot see. Veza’s Access Graph now extends deep into the infrastructure layer to find where AI agents are hiding.

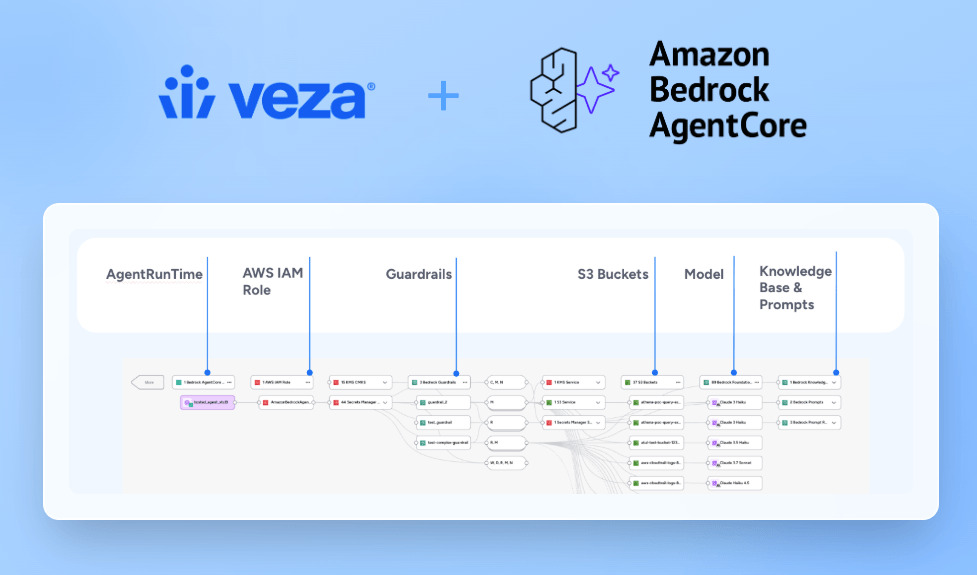

- Unmask Agents in Cloud AI Services: Veza scans your cloud platforms to discover agents that are otherwise invisible to security. It surfaces Amazon Bedrock agents, whether they were built with Bedrock Agents or deployed on Amazon Bedrock AgentCore, and shows you exactly who built them, what tools they use, and what data they can touch.

- Detect Private MCP Servers on AWS: Veza scans your AWS environment to identify service accounts and IAM roles associated with AI workloads. It flags private MCP servers launched on EC2 or Lambda that have been granted high-privilege access to sensitive data stores like Redshift or S3.

- Discover Tools Beyond the MCP Server: Veza doesn’t just see the “front door” of an MCP server. It identifies the granular tools the agent is actually using—such as specific API tool calls to Jira, GitHub, or internal CRM systems.

- Audit Agent Permissions and Tool Access: For AI agents that access internal tools and resources through a centralized authorization layer, Veza inventories the granted scopes and surfaces overprivileged combinations, such as agents with both read access to sensitive data and the ability to write or exfiltrate to external destinations. Beyond the authorization layer, Veza maps the full tool surface: which MCP servers each agent connects to, and which specific tools (like code push, ticket updates, or database queries) those agents can invoke.
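The overprivileged-combination check described above can be sketched in a few lines of Python. This is a hypothetical illustration, not Veza’s implementation: it assumes each MCP tool has already been labeled with capabilities such as `reads_sensitive` or `writes_external`, unions the tools across every MCP server an agent connects to, and flags the read-plus-external-write combination. The `max_tools=5` default is an assumption that mirrors the “>5 tools” milestone in the roadmap later in this post.

```python
from dataclasses import dataclass

# Hypothetical capability labels; tagging tools with them is assumed to
# happen upstream (for example, from tool descriptions or manual review).
READS_SENSITIVE = "reads_sensitive"
WRITES_EXTERNAL = "writes_external"


@dataclass(frozen=True)
class Tool:
    name: str
    capabilities: frozenset[str]


def tool_surface(agent_servers: list[str],
                 server_tools: dict[str, list[Tool]]) -> dict[str, Tool]:
    """Collect every tool reachable through the MCP servers an agent uses."""
    surface: dict[str, Tool] = {}
    for server in agent_servers:
        for tool in server_tools.get(server, []):
            surface[tool.name] = tool
    return surface


def assess_agent(agent_servers: list[str],
                 server_tools: dict[str, list[Tool]],
                 max_tools: int = 5) -> tuple[list[str], list[str]]:
    """Return (sorted tool names, findings) for one agent."""
    tools = tool_surface(agent_servers, server_tools)
    capabilities: set[str] = set()
    for tool in tools.values():
        capabilities |= tool.capabilities
    findings: list[str] = []
    # Toxic combination: the agent can read sensitive data AND write externally,
    # even if those abilities live in different tools on different servers.
    if READS_SENSITIVE in capabilities and WRITES_EXTERNAL in capabilities:
        findings.append("toxic_combination")
    # Crude "excessive agency" proxy: more tools than the chosen threshold.
    if len(tools) > max_tools:
        findings.append("excessive_tool_count")
    return sorted(tools), findings


if __name__ == "__main__":
    server_tools = {
        "crm-mcp": [Tool("lookup_customer", frozenset({READS_SENSITIVE}))],
        "github-mcp": [Tool("push_code", frozenset({WRITES_EXTERNAL}))],
    }
    print(assess_agent(["crm-mcp", "github-mcp"], server_tools))
```

Unioning capabilities across servers is a deliberate over-approximation: it flags an agent that could read through one tool and send data out through another, which is exactly the path a per-tool review would miss.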

Phase 2: Solving the Ownership Crisis

Shadow AI thrives on anonymity. One of the highest-risk findings in any environment is an unowned AI agent.

- The Suggested Owner Agent: When a developer spins up a private MCP server on AWS and later moves teams, the server’s identity is left behind as an “orphaned identity.” Veza’s Suggested Owner Agent automatically maps these unmanaged identities back to the most likely responsible human owner based on activity and department metadata.

- Establish Accountability: By assigning a human “pilot” to every “co-pilot,” you ensure that every automated action is tied to a real person who can be held accountable during an audit.
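A suggested-owner heuristic along these lines can be sketched as follows. The three ranking rules, the `User` record, and the `(actor, agent_id)` event format are hypothetical assumptions chosen to match the “activity and department metadata” signals described above; they are not how Veza’s Suggested Owner Agent actually scores candidates.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class User:
    """Directory entry (hypothetical fields)."""
    user_id: str
    active: bool
    department: str


def suggest_owner(
    agent_id: str,
    creator: str,
    agent_department: str,
    events: list[tuple[str, str]],   # (actor, agent_id) pairs from activity logs
    directory: dict[str, User],
    dept_leads: dict[str, str],
) -> tuple[Optional[str], str]:
    """Return (suggested_owner, reason) using a hypothetical three-step ranking."""
    # 1. The creator keeps ownership only if still active AND still on the team
    #    the agent is tagged to; moving teams is what orphans the agent.
    owner = directory.get(creator)
    if owner and owner.active and owner.department == agent_department:
        return creator, "creator"

    # 2. Otherwise rank active users who used this agent: same department
    #    first, then by number of interactions.
    counts = Counter(actor for actor, target in events if target == agent_id)
    candidates = [uid for uid in counts if uid in directory and directory[uid].active]
    if candidates:
        best = max(
            candidates,
            key=lambda uid: (directory[uid].department == agent_department, counts[uid]),
        )
        return best, "activity"

    # 3. Fall back to the department lead, if one is on record.
    lead = dept_leads.get(agent_department)
    if lead:
        return lead, "department"
    return None, "unresolved"
```

Returning the reason alongside the name lets a reviewer see how strong the suggestion is before confirming it.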

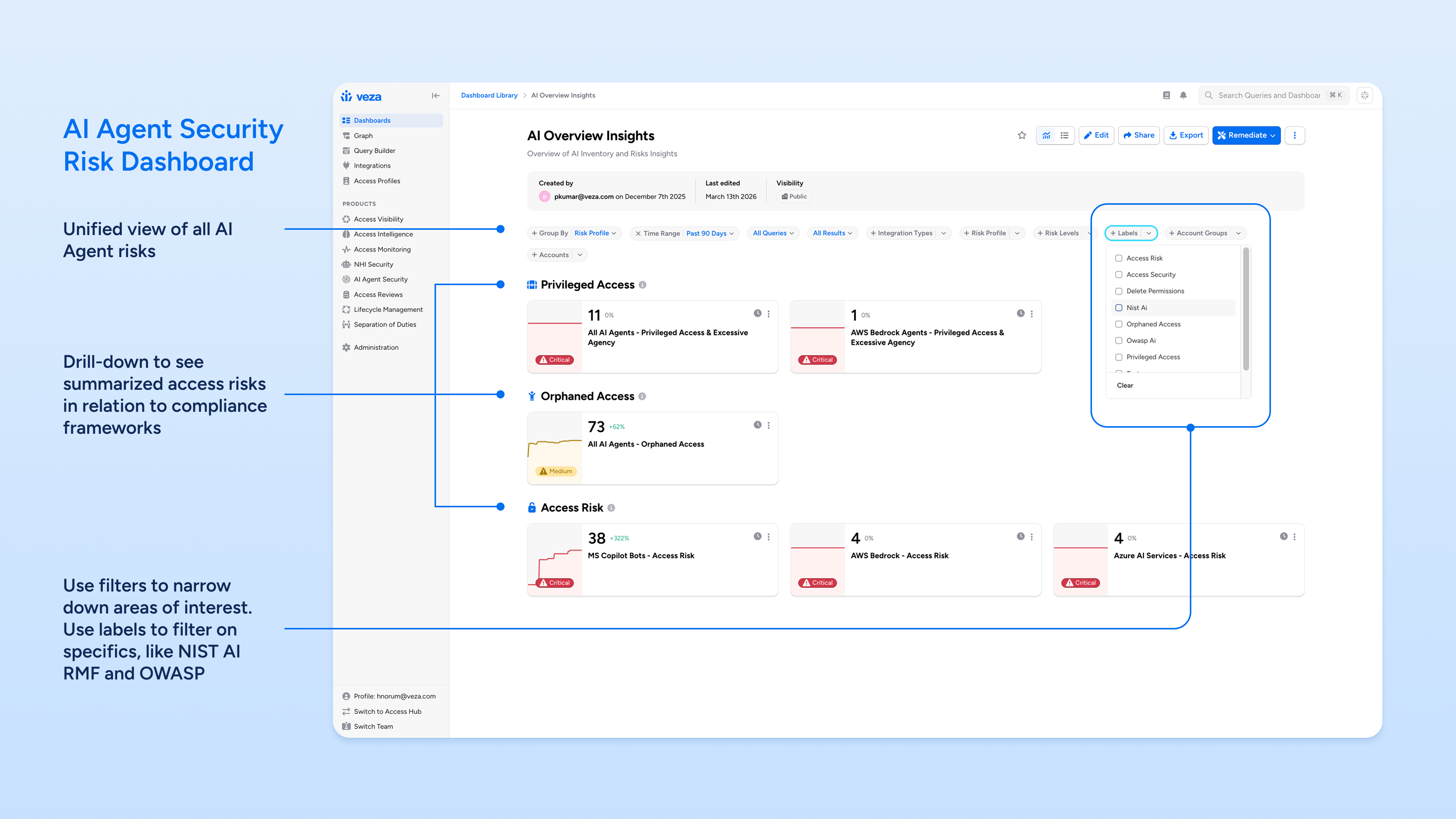

Phase 3: Governance via the AI Agent Access Security Dashboard

To move from discovery to sustained governance, Veza provides an out-of-the-box AI Agent Access Security Dashboard. It is built to simplify executive oversight and comes pre-mapped to industry-standard frameworks:

- Mapping to the NIST AI Risk Management Framework (AI RMF) and the OWASP Top 10 for LLM Applications: every AI risk is automatically tagged and mapped to specific controls.

    - Orphaned AI Access (OWASP LLM03 / NIST GOVERN): Surfaces shadow agents with no human owner, so that no agent operates without accountability.

    - AI Access Risks (OWASP LLM08 / NIST MAP): Visualizes unauthorized access risks and potential data exfiltration points.

    - Privileged AI Access (OWASP LLM06 / NIST GOVERN): Identifies agents with “Excessive Agency” so you can remove unnecessary write/delete permissions on production data.

The Power of Permissions for AI Governance

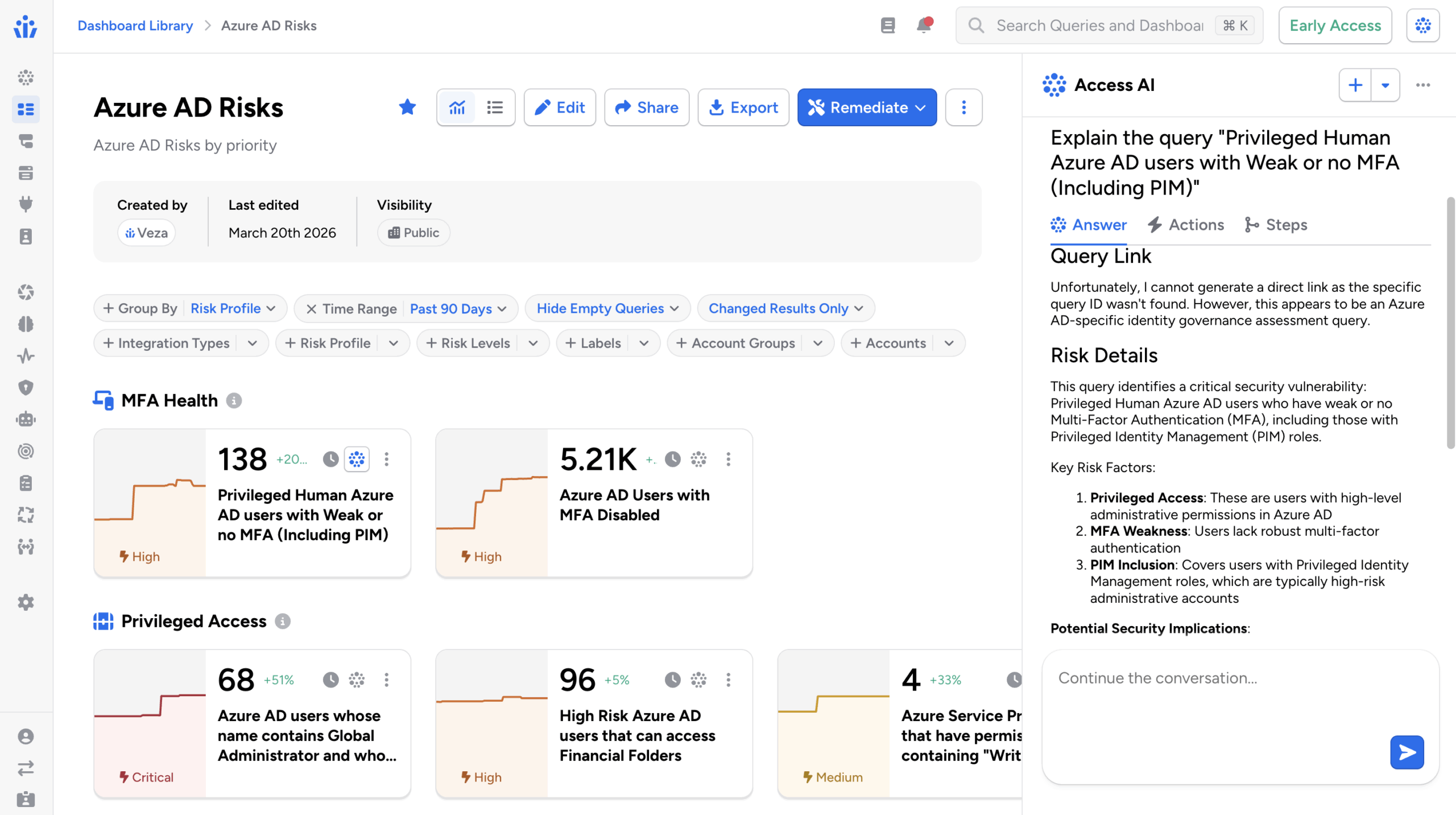

Because AI agents often assume the roles of the humans authorizing them, you cannot secure AI in a vacuum. You need a single platform that understands the identity of the human first.

Veza provides full, end-to-end visibility into each access path:

Human User → AI Agent → MCP Server → Tools/Functions → Data Resources.

By having visibility into the human-to-resource relationship first, and then layering AI agent discovery on top, you gain a complete map of who is truly responsible for an action—and what that action can touch.
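To illustrate why the full path matters, here is a small Python sketch that enumerates every Human User → AI Agent → MCP Server → Tool → Data Resource path with a depth-first search. The node names, the `type:` prefix convention, and the edge list are invented for the example; a real access graph would carry far richer metadata about each hop.

```python
from collections import defaultdict
from typing import Callable

# Hypothetical edges (source, target). The "type:" prefix stands in for the
# node type a real access graph would store as a separate attribute.
EDGES = [
    ("user:alice", "agent:support-bot"),
    ("agent:support-bot", "mcp:crm-server"),
    ("agent:support-bot", "mcp:jira-server"),
    ("mcp:crm-server", "tool:lookup_customer"),
    ("mcp:jira-server", "tool:update_ticket"),
    ("tool:lookup_customer", "data:customers_table"),
    ("tool:update_ticket", "data:jira_project_ops"),
]


def build_graph(edges: list[tuple[str, str]]) -> dict[str, list[str]]:
    """Turn an edge list into an adjacency list."""
    graph: dict[str, list[str]] = defaultdict(list)
    for src, dst in edges:
        graph[src].append(dst)
    return graph


def access_paths(
    graph: dict[str, list[str]],
    start: str,
    is_resource: Callable[[str], bool] = lambda n: n.startswith("data:"),
) -> list[list[str]]:
    """Enumerate every simple path from `start` to a data resource (iterative DFS)."""
    paths: list[list[str]] = []
    stack = [(start, [start])]
    while stack:
        node, path = stack.pop()
        if is_resource(node):
            paths.append(path)
            continue
        for nxt in graph.get(node, []):
            if nxt not in path:  # skip cycles so the search always terminates
                stack.append((nxt, path + [nxt]))
    return paths


if __name__ == "__main__":
    for path in sorted(access_paths(build_graph(EDGES), "user:alice")):
        print(" → ".join(path))
```

Taking the last node of each path gives the set of data resources a given human can reach through their agents, which is one simple way to express blast radius.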

Your AI Agent Security Roadmap Summary

| Milestone | Action Item | Feature |
|---|---|---|
| Week 1 | Scan cloud and AI platforms for managed and unmanaged MCP servers and shadow AI agents. | AI Agent Security Discovery |
| Week 2 | Use NIST- and OWASP-based checks to find orphaned access and high-risk bot scopes. | AI Agent Access Security Dashboard |
| Month 1 | Map all private AWS agents to human owners. | Suggested Owner Agent |
| Month 2 | Flag agents with “Excessive Agency” and more than five tools. | Blast Radius Visualization |
| Ongoing | Automate remediation of drift and orphaned agents. | Veza Actions |

Conclusion: AI Governance Needs Visibility, Intelligence, Monitoring, and Controls

In the agentic era, “I think we’re secure” is a dangerous gamble. Identity is your new perimeter, and with Gartner predicting that over 50% of all enterprise data access will be initiated by autonomous agents rather than human users by the end of 2026, you can no longer afford to fly blind.

Whether your developers are building on rogue AWS instances or hiding agents inside “approved” cloud platforms, the risk is the same: ungoverned access is a backdoor to your most sensitive data.

Talk to us. Let us show you AI Agent Security in action, and find your shadow AI before an attacker does.